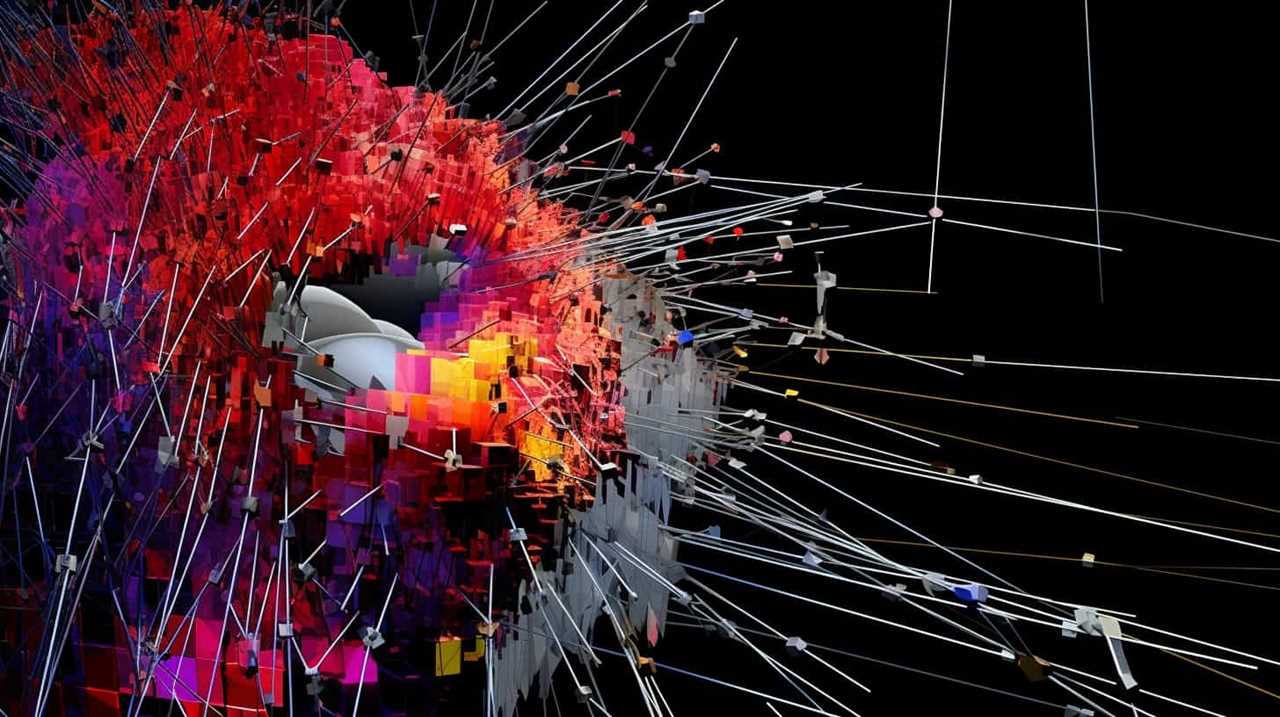

Understanding the Enemy: Adversarial Attacks

Adversarial attacks exploit weaknesses in AI models by feeding them inputs crafted to produce wrong outputs, which puts both their accuracy and their security at risk. Researchers are working on defenses that harden models against these attacks.

Types of Adversarial Attacks

Learn about the four common types of adversarial attacks that threaten AI models, including the Fast Gradient Sign Method (FGSM) and universal adversarial perturbations.
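
To make the Fast Gradient Sign Method concrete, here is a minimal sketch in plain Python. It assumes a toy logistic-regression model with hand-picked weights; the names `fgsm` and `predict_proba` and all the numbers are illustrative assumptions, not taken from any particular library. The attack nudges every input feature by a fixed step `eps` in the direction that increases the model's loss.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def predict_proba(w, b, x):
    """Probability of class 1 under a logistic-regression model."""
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)

def sign(v):
    return (v > 0) - (v < 0)

def fgsm(w, b, x, y, eps):
    """Shift each feature by eps along the sign of the loss gradient.

    For cross-entropy loss on logistic regression, the gradient of the
    loss with respect to the input is (p - y) * w.
    """
    p = predict_proba(w, b, x)
    grad = [(p - y) * wi for wi in w]
    return [xi + eps * sign(g) for xi, g in zip(x, grad)]

# Hypothetical toy model and input (assumed values for illustration).
w, b = [2.0, -1.0], 0.0
x, y = [0.5, 0.2], 1

x_adv = fgsm(w, b, x, y, eps=0.5)
print("clean score:      ", round(predict_proba(w, b, x), 3))
print("adversarial score:", round(predict_proba(w, b, x_adv), 3))
```

In this toy setup the clean input is scored above 0.5 and the perturbed input below it, even though no feature moved by more than `eps`. Real attacks target deep networks and typically use much smaller perturbations.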

Detecting Adversarial Attacks

Discover how active detection techniques play a crucial role in identifying and mitigating potential threats posed by adversarial attacks on AI models.
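
One detection idea from the research literature, often called feature squeezing, can be sketched as follows: run the model on the raw input and on a coarsened copy of it, and flag the input when the two predictions disagree strongly. The toy model, the one-bit squeezing, and the `0.2` threshold below are assumptions chosen for illustration only.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

# Hypothetical toy model: logistic regression with fixed weights.
W = [4.0, -4.0]
B = 0.0

def predict_proba(x):
    return sigmoid(sum(w * xi for w, xi in zip(W, x)) + B)

def squeeze(x, bits=1):
    """Round each feature in [0, 1] to one of 2**bits evenly spaced levels."""
    levels = 2 ** bits - 1
    return [math.floor(xi * levels + 0.5) / levels for xi in x]

def is_suspicious(x, threshold=0.2):
    """Flag inputs whose score changes a lot once fine detail is removed."""
    return abs(predict_proba(x) - predict_proba(squeeze(x))) > threshold

clean = [0.9, 0.1]         # score barely changes after squeezing
borderline = [0.55, 0.45]  # score depends on small feature variations

print(is_suspicious(clean), is_suspicious(borderline))
```

The second input stands in for a perturbed sample: its score hinges on fine-grained feature values, which is the kind of sensitivity this check is meant to surface. In practice the threshold would need tuning on held-out data to balance false alarms against missed attacks.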

Preventive Measures

Implementing techniques like adversarial training and input sanitization can proactively mitigate adversarial threats, enhancing the security and resilience of AI systems.
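
Adversarial training can be sketched as ordinary training in which each example is paired with an attacked copy of itself. The snippet below assumes a toy logistic-regression model, FGSM as the attack, and a tiny hand-made dataset; the function names and hyperparameters (`eps`, `lr`, `epochs`) are illustrative assumptions.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def sign(v):
    return (v > 0) - (v < 0)

def predict_proba(w, b, x):
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)

def fgsm(w, b, x, y, eps):
    """FGSM against logistic regression: input gradient is (p - y) * w."""
    p = predict_proba(w, b, x)
    return [xi + eps * sign((p - y) * wi) for xi, wi in zip(x, w)]

def sgd_step(w, b, x, y, lr):
    """One gradient step on the cross-entropy loss for a single example."""
    err = predict_proba(w, b, x) - y
    return [wi - lr * err * xi for wi, xi in zip(w, x)], b - lr * err

def adversarial_train(data, eps=0.1, lr=0.5, epochs=200):
    w, b = [0.0] * len(data[0][0]), 0.0
    for _ in range(epochs):
        for x, y in data:
            x_adv = fgsm(w, b, x, y, eps)         # attack the current model
            w, b = sgd_step(w, b, x, y, lr)       # learn from the clean input
            w, b = sgd_step(w, b, x_adv, y, lr)   # and from its attacked copy
    return w, b

def accuracy(w, b, data):
    hits = sum((predict_proba(w, b, x) > 0.5) == bool(y) for x, y in data)
    return hits / len(data)

# Hypothetical toy dataset (assumed values for illustration).
data = [([1.0, 0.0], 1), ([0.0, 1.0], 0), ([0.8, 0.2], 1), ([0.2, 0.8], 0)]
w, b = adversarial_train(data)
attacked = [(fgsm(w, b, x, y, 0.1), y) for x, y in data]

print("clean accuracy: ", accuracy(w, b, data))
print("robust accuracy:", accuracy(w, b, attacked))
```

Regenerating the attacked copies against the current weights at every step matters: examples crafted once against an early model would stop being challenging as training progresses.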

Enhancing AI Model Security

Evaluate the robustness of AI models and explore techniques like defensive distillation to strengthen their resilience against adversarial attacks.
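
At the core of defensive distillation is a softmax with a temperature parameter: a first model is trained at a high temperature, and its softened output probabilities become the training labels for a second, distilled model. The sketch below shows only that softening step; the logits and the temperature of `20.0` are assumed values for illustration.

```python
import math

def softmax(logits, temperature=1.0):
    """Softmax with temperature; higher values give smoother probabilities."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical logits from a first ("teacher") model for one input.
logits = [6.0, 2.0, 1.0]

hard = softmax(logits, temperature=1.0)
soft = softmax(logits, temperature=20.0)

print("T=1: ", [round(p, 3) for p in hard])
print("T=20:", [round(p, 3) for p in soft])
```

The softened labels keep the same ranking of classes but spread probability more evenly, which is intended to make the distilled model's outputs less sensitive to small input changes.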

Defending Against Adversarial Attacks

While no AI model is immune to adversarial attacks, implementing robust defenses can help fortify these systems against potential threats and minimize their impact.

Stay Informed

Keeping up with new training methods and defense strategies is crucial to fortifying AI models against evolving adversarial attacks.

In conclusion, safeguarding AI models against adversarial attacks is vital to keeping them accurate and reliable as more systems come to depend on them.