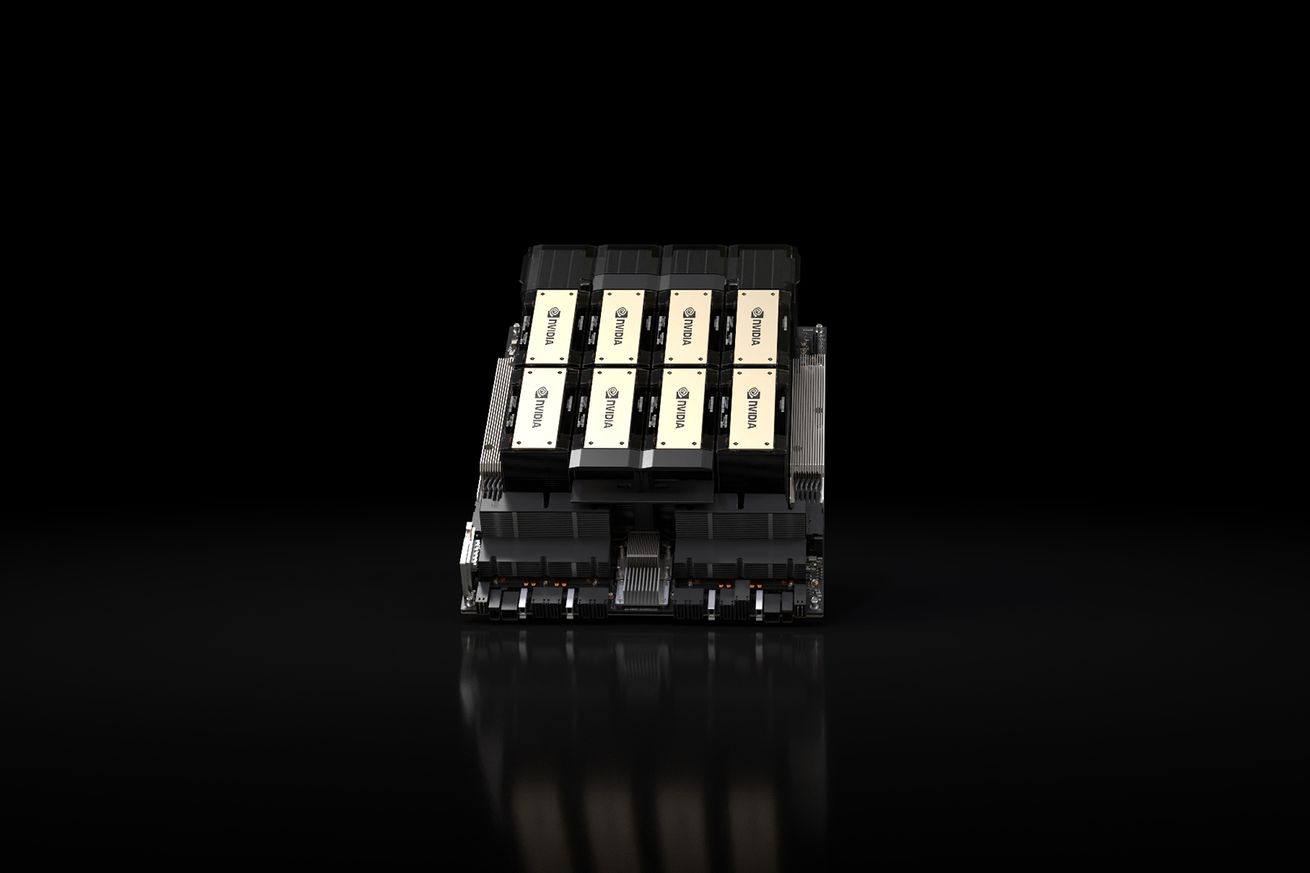

Nvidia’s New AI Chip: The HGX H200

Nvidia is gearing up to release the HGX H200, a state-of-the-art AI chip designed to enhance demanding generative AI tasks. The new GPU boasts improved memory capacity and bandwidth, promising accelerated performance for AI models and high-performance computing applications. The initial shipment is scheduled for the second quarter of 2024.

NVIDIA Tesla A100 Ampere 40 GB Graphics Processor Accelerator – PCIe 4.0 x16 – Dual Slot

Standard Memory: 40 GB

As an affiliate, we earn on qualifying purchases.

As an affiliate, we earn on qualifying purchases.

Enhanced Memory Capabilities

The H200 features a new memory spec, HBM3e, boosting its memory bandwidth to 4.8 terabytes per second and increasing its total memory capacity to 141GB. These enhancements are expected to contribute to faster and more efficient AI processing.

ASRock Radeon AI PRO R9700 Creator 32GB Professional Graphics Card, 2920 MHz Boost Clock, 32GB GDDR6, AMD RDNA 4, AI Accelerators, DisplayPort 2.1a, PCIe 5.0, Blower Cooler

Professional AI & Creator Workstation: AMD Radeon AI PRO R9700 GPU with 32GB GDDR6 is engineered for AI…

As an affiliate, we earn on qualifying purchases.

As an affiliate, we earn on qualifying purchases.

Compatibility and Pricing

The H200 is designed to be compatible with systems supporting H100s, ensuring a smooth transition for cloud providers. Leading providers like Amazon, Google, Microsoft, and Oracle are expected to offer the H200 GPUs. While pricing details are undisclosed, the chips are anticipated to be on the higher end.

BestParts Mini 8 pin to mini 12+4 pin RTX 4090 4080 L20 L40 H100 GPU Power Cable Compatible with Dell PowerEdge R740 R740XD T550 T560 12.2inches

* mini 8pin to mini12Pin

As an affiliate, we earn on qualifying purchases.

As an affiliate, we earn on qualifying purchases.

High Demand for AI Chips

Nvidia’s announcement comes at a time when the demand for AI chips like the H100 is soaring. The scarcity of H100 chips has led to collaborative efforts and even using them as collateral for loans. With plans to triple H100 production in 2024, the demand for the new H200 chip is expected to be substantial.

Code: The Hidden Language of Computer Hardware and Software

As an affiliate, we earn on qualifying purchases.

As an affiliate, we earn on qualifying purchases.

Future Prospects

The continuous evolution of AI technology, fueled by advancements like the HGX H200, promises to reshape industries and drive societal progress. Nvidia’s cutting-edge chip signifies a new era in AI processing capabilities, paving the way for innovative applications and solutions.